Machines that Judge Us

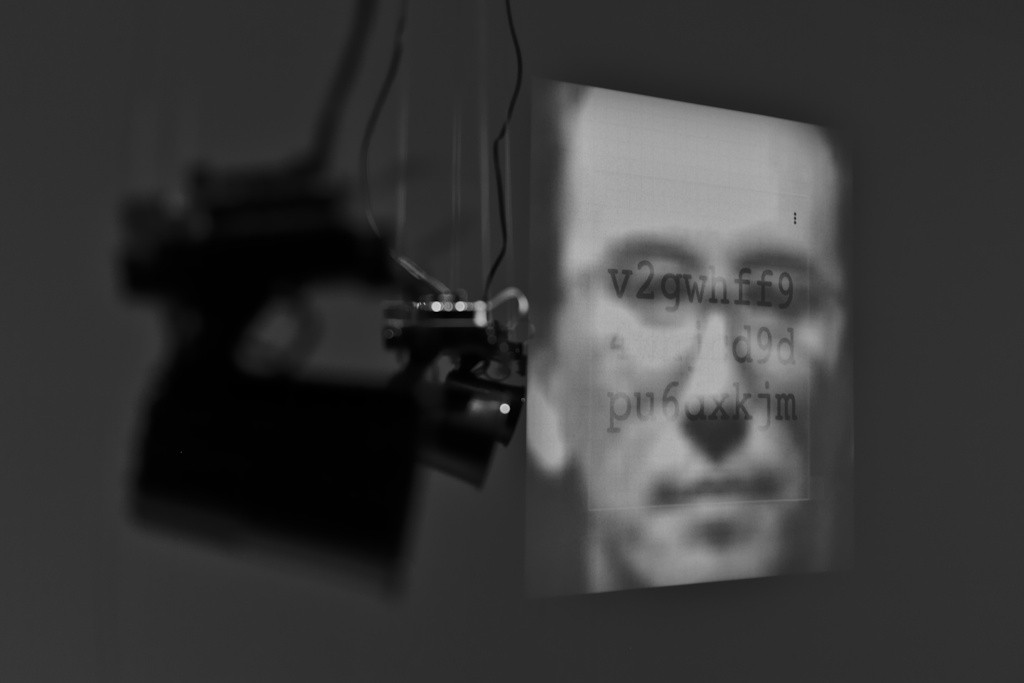

How would machines make us feel if we could give a human voice to their algorithmic logic?

By attempting to answer this question, this project explores our relationship to algorithms, surveillance, and inherently biased systems.

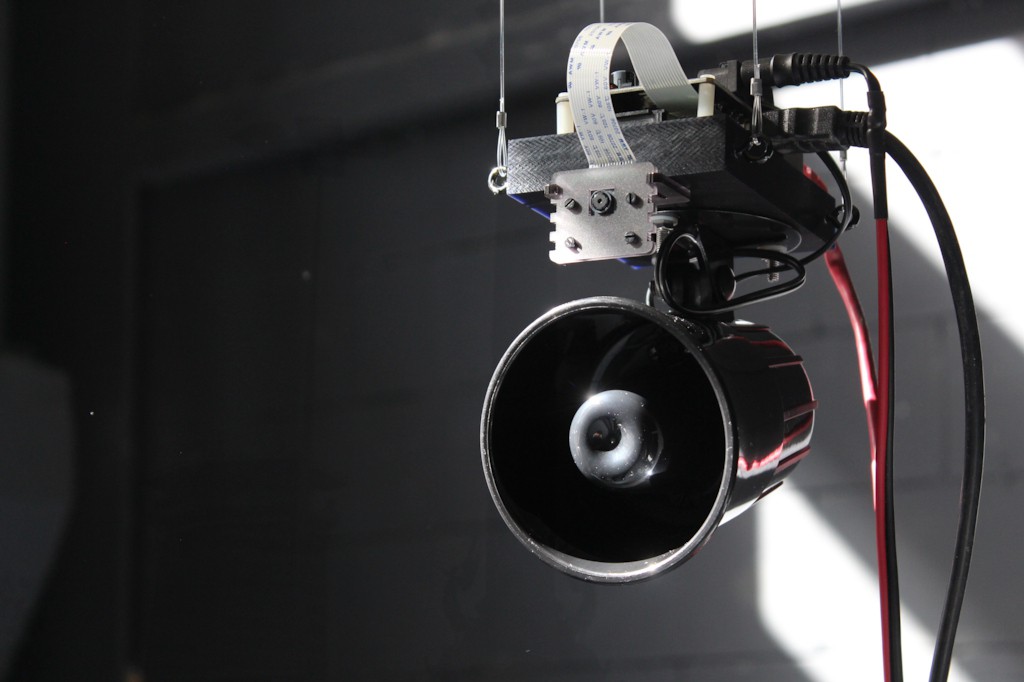

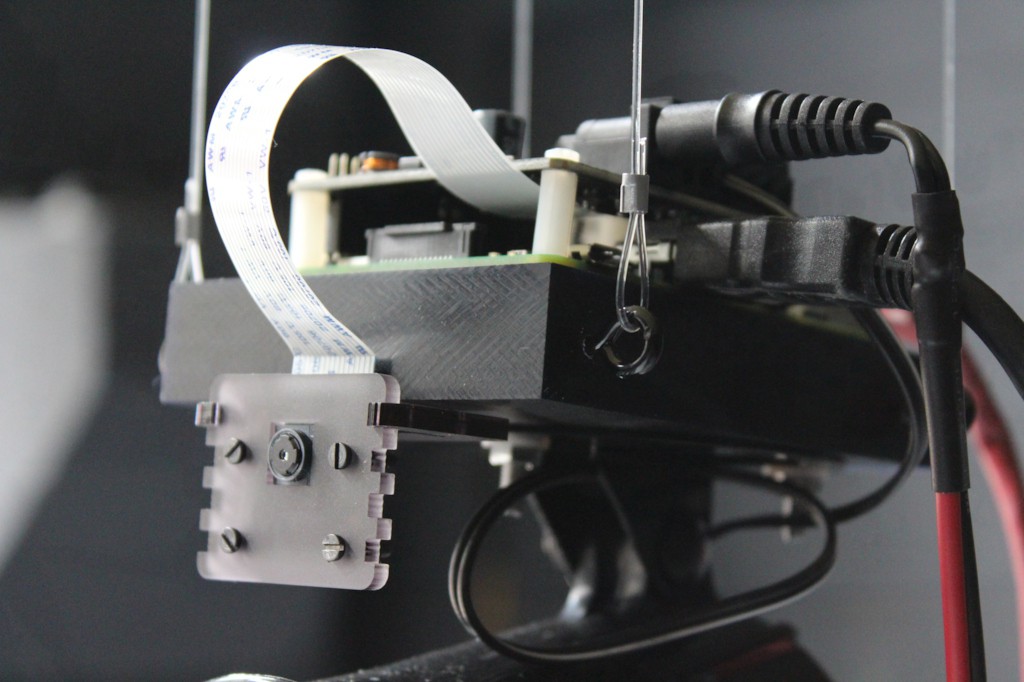

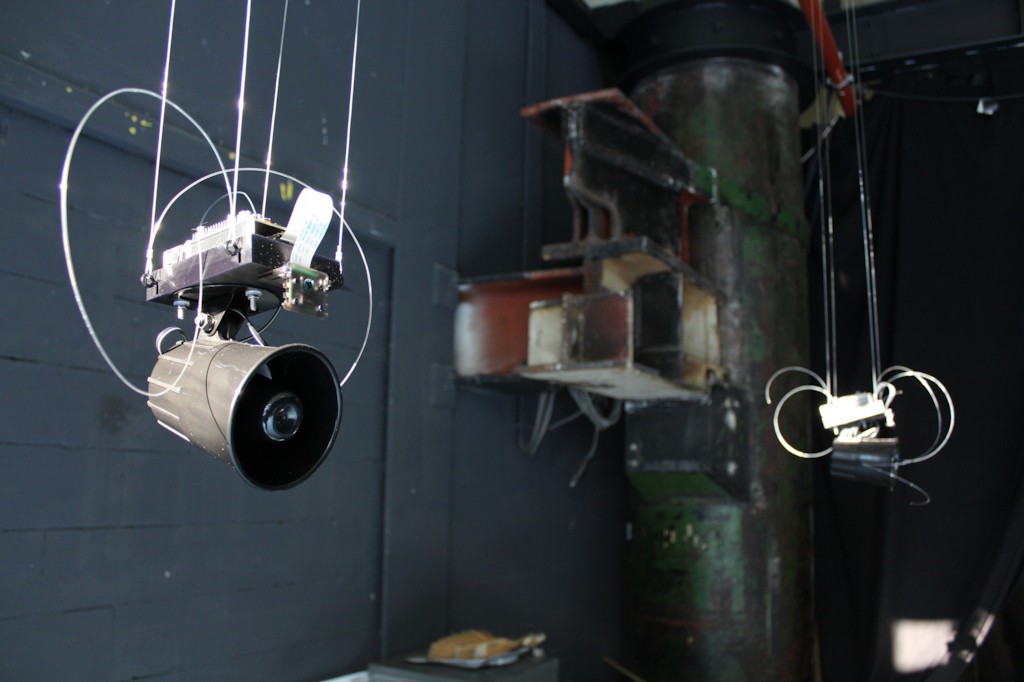

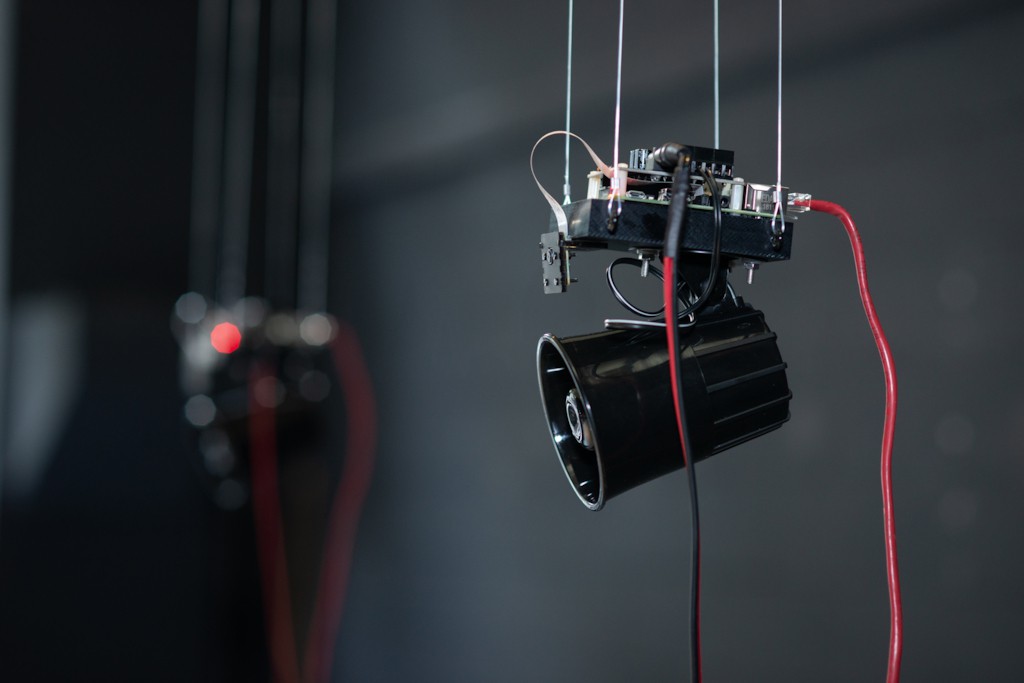

A series of small, identical machines observe humans moving within a space. Once a machine singles out one person, that person becomes their shared focus. Each machine then judges how valuable that person would be, based purely on visual appearance. If the person is deemed valuable, the machines talk about how they want to exploit this human being. If the person is deemed worthless, they talk about why that is the case and how they would rid themselves of this person as soon as possible.

Background

This creates the unfortunate situation where algorithms consistently, and with false confidence, make invalid decisions about and on behalf of their users. Because these decisions are made invisibly and by non-human agents, the public tends to downplay them or be unaware of their existence. This project intends to make the nature of these algorithms more apparent and easier to understand.

With Support From

- Summer Sessions @ V2_ Lab for the Unstable Media

- Production Network for Electronic Art (PNEK)